How We Built This

Zero lines of hand-written code, three weeks, 130 tickets. I built and launched the website and the back office for the conference in three weeks using AI, "coding" no more than a couple of hours a day, between meetings. Given the context of the Startup Day conference, I thought the attendees and the community would appreciate knowing what it actually took to build it, what didn't work so well, and what I'd do differently.

Let me start by saying that I wrote this article using old-school typing, no AI.

In this article, I cover not only the code behind the website; I'll talk about design/UX, marketing & copy, content, integration, DevOps, managing tasks, and even some basic GTM.

This article is quite technical and in-depth, so brace yourself.

I've been writing software for over 30 years. If you are not a software engineer or you don't have the domain expertise I have, your mileage with AI will vary.

Ground Rules: Zero Lines of Code + Minimum Dependency

I didn't write a single line of code. That was on purpose. In many situations, it would have been much faster and easier for me simply to open the code, make the changes that I wanted, and be done with it. I forced myself not to touch the code so I could practice the new-new way of building products.

In fact, I barely wrote any copy for the website either. Almost every piece of text, from navigation to sentences to paragraphs (not this article, though), was written entirely by Claude Code (Claude Code, not Claude).

My second decision, besides not writing code, was to simplify this project as much as possible. This is a conference, and this website has an "expiration date." There is no point in creating a scalable and sophisticated project with complex integrations. I minimized the number of SaaS services or dependencies I used to build and operate the site.

The AI Setup

For this project, I used only Claude for coding. I had four basic setups: 1) A project on Claude Desktop called "Startup Day" focused on the non-technical aspects of the conference (strategy, positioning, pricing, GTM, etc.). 2) A project on Claude Desktop called "Startup Day-Tech" for me to discuss possible solutions. 3) Claude Code on VS Code working on the project repo. 4) A repo called "SeattleFlow-Biz" that I used for some GTM activities (using Claude Code, not Cowork). I really didn't need the Claude Desktop project for the tech stuff, and I could have just used Claude Code for those discussions, but somehow my brain likes shifting between those windows.

The Tech Stack

I started by debating solutions with Claude ("Startup Day-Tech") about deployment, VMs, VPS, AWS/Azure, different vendors for databases, email, text messages, payments, etc. It wasn't simply "tell me what's best" or "tell me what I should use"; I would explain my strategy, my goals, my skills, etc. Often, Claude would respond with "since you already know about X service, just use that."

Here's what I'm using: Azure Web App (compute), PostgreSQL (database), ASP.NET Core, C#/.NET 10, Bootstrap (CSS/components), HTMX (dynamic rendering), Stripe (payments), Postmark (transactional emails), Loops (campaign emails), and Plausible (analytics).

One of the key unlocks to simplifying my system was discovering Hangfire, which is a poor-man's queue/scheduling system built on top of PostgreSQL. I hate managing infrastructure, and each piece that you add to your infra exponentially increases the complexity of maintaining it, extending it, and figuring out what broke. If you've built even a medium-sized application, you know how complex queues/notifications/scheduling services can be and what a hassle it is to investigate why something didn't happen (or happened twice).
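To make the "queue inside the database" idea concrete, here is a minimal sketch in Python. It is not Hangfire's API and not the site's actual code. It uses the standard-library `sqlite3` module as a stand-in for PostgreSQL, and the table layout, the `dedupe_key` column, and the function names are all assumptions made for illustration. The point it shows is that when jobs are just rows, a unique key guards against the "happened twice" problem, and "why didn't it run?" becomes a query.

```python
import sqlite3

# Hypothetical schema: one row per job. The UNIQUE dedupe_key is what
# prevents the same logical job (e.g. one receipt email) from being queued twice.
SCHEMA = """
CREATE TABLE IF NOT EXISTS jobs (
    id INTEGER PRIMARY KEY,
    dedupe_key TEXT NOT NULL UNIQUE,
    kind TEXT NOT NULL,
    run_at TEXT NOT NULL,              -- ISO-8601 text, so string order == time order
    status TEXT NOT NULL DEFAULT 'pending'
)
"""


def connect() -> sqlite3.Connection:
    """Open an in-memory database (stand-in for the real one) and create the table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    return conn


def enqueue(conn: sqlite3.Connection, dedupe_key: str, kind: str, run_at: str) -> bool:
    """Queue a job. Returns False if a job with the same dedupe_key already exists."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO jobs (dedupe_key, kind, run_at) VALUES (?, ?, ?)",
                (dedupe_key, kind, run_at),
            )
    except sqlite3.IntegrityError:
        return False
    return True


def claim_due(conn: sqlite3.Connection, now: str) -> list[tuple[int, str]]:
    """Mark every pending job that is due as 'running' and return (id, kind) pairs."""
    with conn:
        rows = conn.execute(
            "SELECT id, kind FROM jobs "
            "WHERE status = 'pending' AND run_at <= ? ORDER BY run_at, id",
            (now,),
        ).fetchall()
        conn.executemany(
            "UPDATE jobs SET status = 'running' WHERE id = ?",
            [(job_id,) for job_id, _ in rows],
        )
    return rows
```

This single-worker sketch skips retries and locking. With several workers on PostgreSQL you would typically claim rows with `SELECT ... FOR UPDATE SKIP LOCKED`, which is the kind of detail a library like Hangfire handles for you.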

The Goal

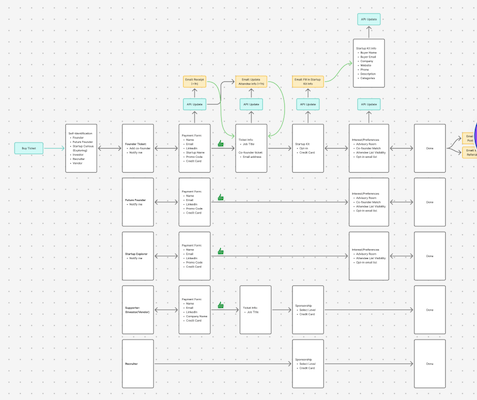

I have used many services to manage events/conferences, including Eventbrite and Luma. Luma is great for meetups and simple event setups, but I wanted something more sophisticated in terms of registration flow, ticket management, etc. We have different stakeholders, tickets that give access to the full conference or Startup Fair-only, complexity around the Advisory Room and Co-founder Matching, and many nuances that Luma and Eventbrite couldn't address. I am also not a big fan of disconnected experiences. Building a conference website and asking people to go somewhere else to buy tickets is not ideal.

Plus, I wanted to build this. Why not?!

The website has everything that you see on public pages. It also has several background jobs for email and SMS, from order receipts to pre-event messages. It has an admin page with roles for me and staff to manage orders, attendees, registration, advisory room, and more. It's fully integrated with Stripe, email, and other services that I can monitor.

There are other special pages/services to help GTM and event promotion, including auto-generated assets of speakers, sponsors, advisors, etc.

The Design

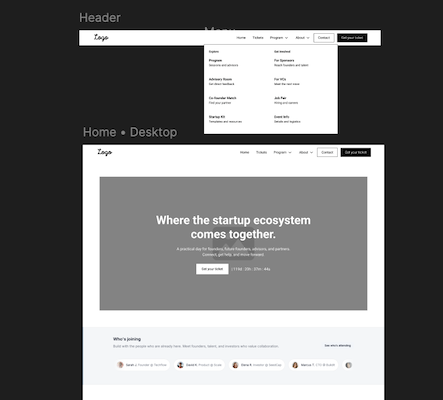

I don't like designs created by AI. I also don't like text created by AI. It's not the slop that bothers me, it's the poor quality -- some people would say these are the same thing. It'll get better, but right now it's just below my bar on the standards of what I want to deliver. I've played with Replit, Lovable, and Google Stitch, and the results are always undifferentiated-purply-overly-designed designs.

I hired a (human) designer. Over a two-week period, we worked on wireframes, design systems, UX flows, and got to a result I was happy with. Figma was our tool of choice. We didn't use Figma's AI tools (Figma Make).

The Kick-off

It has become a standard for me to start every new coding project with a single document I call SPEC.md. It's a terrible name because it's not a spec or a PRD. It's more of a strategic document (think of a mini-PRFAQ) where I explain the vision, the goals, things that I consider important, things that I don't want, etc. This time, I simply wrote about the vision of the conference, the program content, the information about how the advisory room works (from an attendee and advisor perspective), who the conference was for, etc.

I included a section about important dates, ticket prices, social media and marketing strategy, etc.

Then, I spent days going back-and-forth with Claude Code refining this document. Claude would often find inconsistencies in my story and ask me to clarify them, then update the doc accordingly. Once we had a good understanding of that aspect, I asked Claude to break down the pages we would need, our database schema, all the emails and notifications the system would send out, etc. Again, I'd do several rounds of reviews and correct any misunderstandings.

Remember when I said I wanted to minimize external dependencies? Yeah, I didn't even want to use any issue-tracking system (Linear, Jira, Notion). I wanted everything stored in the repository. So, I created a folder called TASKS, and created a Claude Skill (/task) that would add new tasks (yaml + markdown). The first batch of tasks came directly from the SPEC.md file. I told Claude to create tickets in a few stages. It generated roughly 50 tasks. Over the following weeks, we got to 130 tasks, which included features, bugs, and tasks (e.g., setting up an external service).
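For readers who haven't seen tickets stored as files, here is a minimal sketch of what "yaml + markdown" can look like and how little code it takes to read. The field names (`id`, `title`, `type`, `status`) and the sample ticket are hypothetical, not the actual format used in the TASKS folder, and the parser only handles flat `key: value` lines rather than full YAML.

```python
from dataclasses import dataclass


@dataclass
class Task:
    meta: dict[str, str]  # front-matter fields, all kept as strings
    body: str             # the Markdown part of the ticket


# Hypothetical ticket file: a front-matter header followed by Markdown.
SAMPLE = """---
id: 001
title: Homepage hero section
type: feature
status: todo
---
## Description
Show the hero video and the primary call to action.
"""


def parse_task(text: str) -> Task:
    """Split a task file into its front-matter fields and Markdown body."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        # No front matter: treat the whole file as the body.
        return Task(meta={}, body=text.strip())
    try:
        end = next(i for i, line in enumerate(lines[1:], start=1) if line.strip() == "---")
    except StopIteration:
        raise ValueError("front matter is missing its closing '---'") from None
    meta: dict[str, str] = {}
    for line in lines[1:end]:
        if not line.strip() or line.lstrip().startswith("#"):
            continue  # skip blank lines and comments
        key, sep, value = line.partition(":")
        if not sep:
            raise ValueError(f"expected 'key: value', got {line!r}")
        meta[key.strip()] = value.strip()
    return Task(meta=meta, body="\n".join(lines[end + 1:]).strip())


def open_tasks(tasks: list[Task]) -> list[Task]:
    """Return every task whose status is not 'done'."""
    return [task for task in tasks if task.meta.get("status") != "done"]
```

Because each ticket is plain text, both a person in an editor and an agent with file access can search, read, and update the backlog without any API.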

I also created an AGENTS.md/CLAUDE.md (symlink'd). I was very explicit about my tech stack, including the exact version number and a link to the documentation. I included what I wanted and didn't want in terms of architecture, code design/style, database (e.g., no hard deletes), security, logging, APIs, frontend, infrastructure, testing, and more. In total, it has 193 lines (3,300 tokens).

I also added two other skills: Review Ticket (/review-ticket) and Review Work (/review-work).

I would open ticket 001, update the Description section, and then invoke the /review-ticket skill, which would ask me questions to expand my description and create acceptance criteria, UX, content, API, DB schema, notifications, jobs, admin portal, etc. The ticket would get updated with this information, and I would review it manually again. This worked surprisingly well, since after a single round of revision, the ticket was ready for implementation. I would simply tell Claude, "Implement ticket 001," and it would take anywhere from one to five minutes to get the job done.

I didn't use branches or worktrees, so I didn't have to deal with merges and conflicts. Everything was on the main branch. Every once in a while, when I knew two tickets were far enough apart in the code, I would kick off two agents working in parallel.

Initially, I would review the code. One thing about Claude Code is that once it has reference code to look at, it uses similar patterns for future code. It's a blessing and a curse (topic for another post). So, I'd review code and simplify things by telling it how to refactor what it just did.

One thing that made a bigger difference than I expected was a simple line that I added to the AGENTS.md: "Don't over-engineer. This site lives for ~6 months." It was surprising how often this came up when Claude decided how to build features. It "got it". My guess is that without that line I would end up with 30% more code, a highly scalable solution for future conferences or to serve tens of thousands of attendees. None of that mattered, and it would have been a liability to this project.

Reviewing Work

As I mentioned, for the first 15-20 tickets, I reviewed everything. Once, I caught a major vulnerability in authentication, and when I pointed that out to Claude, it simply said, "You're right, anyone can impersonate another person." Argh.

But before I did my manual review, I simply invoked my /review-work skill. It scanned the files that had changed, looked for duplicate or unused code, built and ran unit tests, refactored code, did a security review, checked that input validation was being done on both the client and the server (for form POSTs and API calls), and added items to SANITY.md for me to verify manually later.

This skill also provided me with a report at the end of any issues found, any risks, how I should review the code, and updated the corresponding ticket for future reference.

The SANITY.md didn't work. Claude was too prolific in adding things in there, and I ended up with 3,000 manual tests that it wanted me to do. Later, I implemented another skill to try to validate those using the Playwright MCP, which cut those in half.

Video, Images, and Content

The venue sent me a few photos. These are marketing photos for event organizers to see what the space looks like when it is set up. They were great pictures, but they included branding or styling of other organizations. Nano Banana to the rescue! I uploaded the images to Gemini and asked for updates that included my conference information, branding, attendees, etc. The results were great.

Then I tried something different. I uploaded the images and asked for videos of the conference. This was harder than I expected. I had never used AI to generate videos, and I learned that you have to be specific in terms of lighting, camera motion, scene setup, etc. I used both Gemini and Adobe Firefly. I generated ten 8-second videos. Half were nearly useless. The other half had AI mistakes, though subtle ones. I used Adobe Premiere to edit the mistakes out, create transitions, and apply effects. The result was good, and it's the hero video on the homepage.

Remember SPEC.md? I had a lot of language in there about the conference, the positioning, the value prop for attendees, sponsors, speakers, Startup Fair presenters, etc. Again, it turned out to be even more important than I thought when Claude was working on my tickets. I asked it to create placeholder text that I would edit later. Over 90% of the time, the text was so aligned with the messaging framework that I didn't have to do anything. Claude Code created the Visit Seattle page in under two minutes, all from scratch, with barely any direction from me. After it was done, I asked it to add or remove a few items, but I didn't provide links, copy, icons, or anything.

From Figma to CSS

I had a misfire with Tailwind. I had never used Tailwind CSS before, but I heard the cool kids were using it, so I thought a limited project like this would be a great way to learn. Maybe I'm not smart enough (or cool enough), but I hated it. It generated incredibly bloated HTML that I felt was hard to maintain. Claude was writing it, so I shouldn't care, but every time I asked Claude to create something it would push back and say that we would need to do X, Y, and Z to do that, and in the back of my mind I was thinking it's just one class in Bootstrap.

One thing that LLMs are fantastic at is replacing A with B. So, after we had a few pages built, I told it to replace Tailwind with Bootstrap. Since I'm familiar with that framework, I asked Claude to create a design system page first. Then, I provided exports from Figma and told it to modify our CSS to match them. Honestly, this was the moment my jaw dropped. It did a superb job, even with subtle animations and transitions. I didn't use Figma MCP for this because I thought it wouldn't do a good job, given that the Figma file the designer and I had worked on was a tad messy and I didn't think Claude would get it.

This is where actually knowing HTML, CSS, and JavaScript helped. I provided clear directions to Claude about potential problems with margin/padding, borders, z-index, flexbox, etc. I think someone without this knowledge could still achieve the same result, but it will take longer.

Over-engineering

This is a PITA. Codex, Claude, GitHub Copilot, and Gemini love to show how good they are with (coding) design patterns and create these beautiful classes with dependency injection, abstractions, interfaces, and everything else. Sometimes that's what you want, but not all the time. Senior engineers know when to go with the single-class utility approach versus the service/container/abstraction approach. The LLMs have poor judgement about these decisions.

One example for me was the list of speakers. The ticket was to show four speakers on the homepage (profile image, name, company), and have a page with the full list of speakers, including bio and links to their social media accounts. I should have been more specific, but Claude decided not only to create a table in the database but also to create eight classes, an admin interface, and a lot of bells and whistles. Initially, I thought, great. I didn't have to do anything, just enter their information. Then I realized it would take longer for me to update the speakers' information on the admin interface than simply having a JSON file in the project. For a single conference website, the list of speakers is like static content.
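As a rough illustration of the "speakers are static content" alternative, here is a minimal Python sketch: one JSON file, one small loader, and a helper that picks the four homepage entries. The site itself is C#/.NET, so this is not its code. The field names (including the `featured` flag) and the sample entries are assumptions for illustration only.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Speaker:
    name: str
    company: str
    image: str
    bio: str = ""
    links: tuple[str, ...] = ()
    featured: bool = False  # hypothetical flag: show on the homepage first


# Hypothetical contents of a speakers.json file checked into the repo.
SAMPLE_JSON = """[
  {"name": "Speaker A", "company": "Company A", "image": "a.jpg", "featured": true},
  {"name": "Speaker B", "company": "Company B", "image": "b.jpg",
   "bio": "Short bio.", "links": ["https://example.com/b"]},
  {"name": "Speaker C", "company": "Company C", "image": "c.jpg", "featured": true}
]"""


def load_speakers(raw: str) -> list[Speaker]:
    """Parse the JSON text into Speaker objects, failing loudly on missing keys."""
    return [
        Speaker(
            name=item["name"],
            company=item["company"],
            image=item["image"],
            bio=item.get("bio", ""),
            links=tuple(item.get("links", [])),
            featured=item.get("featured", False),
        )
        for item in json.loads(raw)
    ]


def homepage_speakers(speakers: list[Speaker], limit: int = 4) -> list[Speaker]:
    """Featured speakers first, then the rest in file order, capped at `limit`."""
    featured = [s for s in speakers if s.featured]
    rest = [s for s in speakers if not s.featured]
    return (featured + rest)[:limit]
```

Updating a speaker is then a one-line edit and a deploy, with no tables, admin screens, or extra classes to maintain.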

Infra & Deployment

I'm so lazy I prefer to spend an hour copying and pasting information from one place to another instead of spending eight hours automating it. That's the anti-software engineer motto. Navigating the AWS, Azure, or GCP management console gives me anxiety. I know enough to click around and find stuff out, not enough to automate anything.

What I did this time around was build everything on my computer (about 50 tickets), and when the website was nearly done, I asked Claude Code to create a file called DEPLOY.md, with every step to deploy every service that we needed. The basic instructions I gave were for Claude to imagine I know nothing and that I'm setting everything up from scratch. The very first instruction in the file was to go to the Azure portal and create an account. The DEPLOY.md file had 12 sections, each with 3-10 subsections, and each subsection with 1-5 steps of what to do. There were about 100 steps in total.

There were a few steps that required me to click on websites, such as getting the Stripe or Postmark API key, or applying for a phone number for SMS on Azure. The rest was all CLI commands. It was good, but not flawless.

One problem with LLMs is the stagnation of knowledge. The training data is often 6 to 12 months behind when you are using it. This matters when languages, frameworks, APIs, and CLIs change -- and they do change often.

What I did was run each step in DEPLOY.md in bash, and if something didn't seem right (a warning or error), I'd paste it into Claude Code, which would investigate, update DEPLOY.md, and ask me to run it again. I ran close to 100 commands and only had a handful of problems.

Again, I know enough about software engineering to avoid the most egregious mistakes a junior person might make when setting up infrastructure, like not securing Blob/S3, storing API keys in insecure locations, etc. That helps. Also, DNS and SSL. It's amazing how "simple" these technologies are and how much of a pain they can cause. Twice, I had to stop everything and wait a couple of hours because of a minor issue that required propagation.

After all the infra was set up, I asked Claude to create a deployment script for me to run every time I wanted to update the website. No CI/CD or GitHub Actions. From dev directly to prod. It worked like a charm.

Security

I assure you, LLMs suck at security. It's incredible how often they will write sophisticated code with CSRF protection, and the next class allows anyone to get a row of the database via an API without authentication just by enumerating the ID. Argh. My /review-work skill had specific details on how to verify security. The problem is that LLMs don't have a holistic view of threat assessment. I'm sure there are products out there that help with that, but it wasn't worth it for me to invest in investigating and paying for one.

One ticket I had was a pre-launch verification. As part of that, I did a security scan of the code, and it found three major security issues. The only thing I thought about was that someone out there right now has deployed their vibe-coded project management tool with the most stupid vulnerability.
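The "enumerate the ID" hole described above is easy to show in a few lines. This is a minimal Python sketch, not the site's code: the `Order` type, the in-memory `ORDERS` store, and the function names are hypothetical stand-ins for a database table and an API handler. It contrasts a lookup that trusts the ID with one that also checks who is asking.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Order:
    id: int
    owner_id: int
    total_cents: int


class NotAuthenticated(Exception):
    """The caller did not present a valid identity."""


class NotFound(Exception):
    """The order does not exist, or the caller may not see it."""


# Hypothetical in-memory stand-in for the orders table.
ORDERS = {
    1: Order(id=1, owner_id=10, total_cents=5000),
    2: Order(id=2, owner_id=20, total_cents=7500),
}


def get_order_insecure(order_id: int) -> Order:
    # Vulnerable: any caller can walk ids 1, 2, 3, ... and read every order.
    return ORDERS[order_id]


def get_order(order_id: int, current_user_id: Optional[int], is_admin: bool = False) -> Order:
    """Return the order only if the caller is authenticated and allowed to see it."""
    if current_user_id is None:
        raise NotAuthenticated("sign in first")
    order = ORDERS.get(order_id)
    # Raise the same error for "missing" and "not yours" so the response
    # does not reveal which ids exist.
    if order is None or (order.owner_id != current_user_id and not is_admin):
        raise NotFound(f"order {order_id} not found")
    return order
```

A related mitigation is to expose random, unguessable identifiers instead of sequential ones, but that only makes guessing harder. The ownership check is what actually closes the hole.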

Testing

The Playwright MCP is freaking magical! Web browser testing automation is one of the most brittle things ever. Part is the flakiness of the technology. The other part is that it's too deterministic. If you move the button from one place to another, change its id, or update its text, bang, you broke the test. The site still works, but the tests fail. And then you spend more time fixing tests than actually fixing bugs or adding features. LLMs add the right level of non-deterministic approach to testing. It will look at the rendered page and reason over it.

Claude tested the registration flows countless times for me. It would open the browser, "click" around, take screenshots, verify against the plan, and fix what wasn't right. It wasn't super fast, but it worked without intervention.

One thing I didn't know was that Playwright can access the browser's console logs. One time, Claude decided that it couldn't figure out what was happening. It changed the code to log to the console, learned where the error was happening, and fixed the bug. Once, it even told me I had a Chrome extension that was generating an error, unrelated to my website.

I also had the regular unit tests, which are trivial for Claude to run and verify its own work.

GTM

Over the years, I've accumulated several email lists from previous events under the Seattle Flow brand. I also had Google Spreadsheet lists of potential sponsors, investors, startups, and all kinds of organizations and people. The biggest source of data was my email, though. After 25+ years, I have over 6,000 contacts.

First, a conference has two types of customers: attendees and sponsors. With Startup Day, it also has startups as customers for the Startup Fair, and vendors/providers for the Startup Kit.

I had never used a CRM before, and I gave it a try. I picked Clarify, founded by Patrick Thompson, one of the speakers for Startup Day. But I already had a Google spreadsheet with hundreds of entries. The spreadsheet plus dozens of CSVs were a mess.

I created a repo on GitHub to store all those files, and then used Claude Code to write all the scripts to merge, de-duplicate, remove old entries, and also call a service to verify if the email was likely active or not. After that, it generated several lists for me to import into CRM and for attendee outreach.
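The merge and de-duplicate step is the kind of script that is tedious by hand and trivial to generate. Here is a minimal Python sketch of the idea, assuming every CSV has an `email` column (the other column names and the sample rows are made up). It keys contacts by normalized email and fills in blank fields from later files. The email-verification call and the removal of old entries mentioned above are left out.

```python
import csv
import io


def normalize_email(email: str) -> str:
    """Trim whitespace and lowercase so 'Ada@Example.com ' matches 'ada@example.com'."""
    return email.strip().lower()


def merge_contacts(*csv_texts: str) -> list[dict[str, str]]:
    """Merge several CSV exports into one contact per email address.

    Earlier files win: a field is only filled in from a later file
    when the contact does not already have a non-empty value for it.
    """
    merged: dict[str, dict[str, str]] = {}
    for text in csv_texts:
        for row in csv.DictReader(io.StringIO(text)):
            email = normalize_email(row.get("email") or "")
            if "@" not in email:
                continue  # skip rows without a plausible address
            contact = merged.setdefault(email, {"email": email})
            for key, value in row.items():
                if key is None or key == "email":
                    continue  # key is None for cells beyond the header row
                value = (value or "").strip()
                if value and not contact.get(key):
                    contact[key] = value
    return sorted(merged.values(), key=lambda contact: contact["email"])
```

For example, merging `"email,name\nAda@Example.com,Ada\n"` with `"email,company\nada@example.com,Acme\n"` yields a single contact that has both the name and the company.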

I also used Claude to help create the full marketing plan, based on key dates related to the event, and covering a wide range of tactics and mechanisms. I didn't automate most of this because I don't want Claude writing social media posts or sending emails to people. I just don't trust the quality at this point.

SEO & AEO

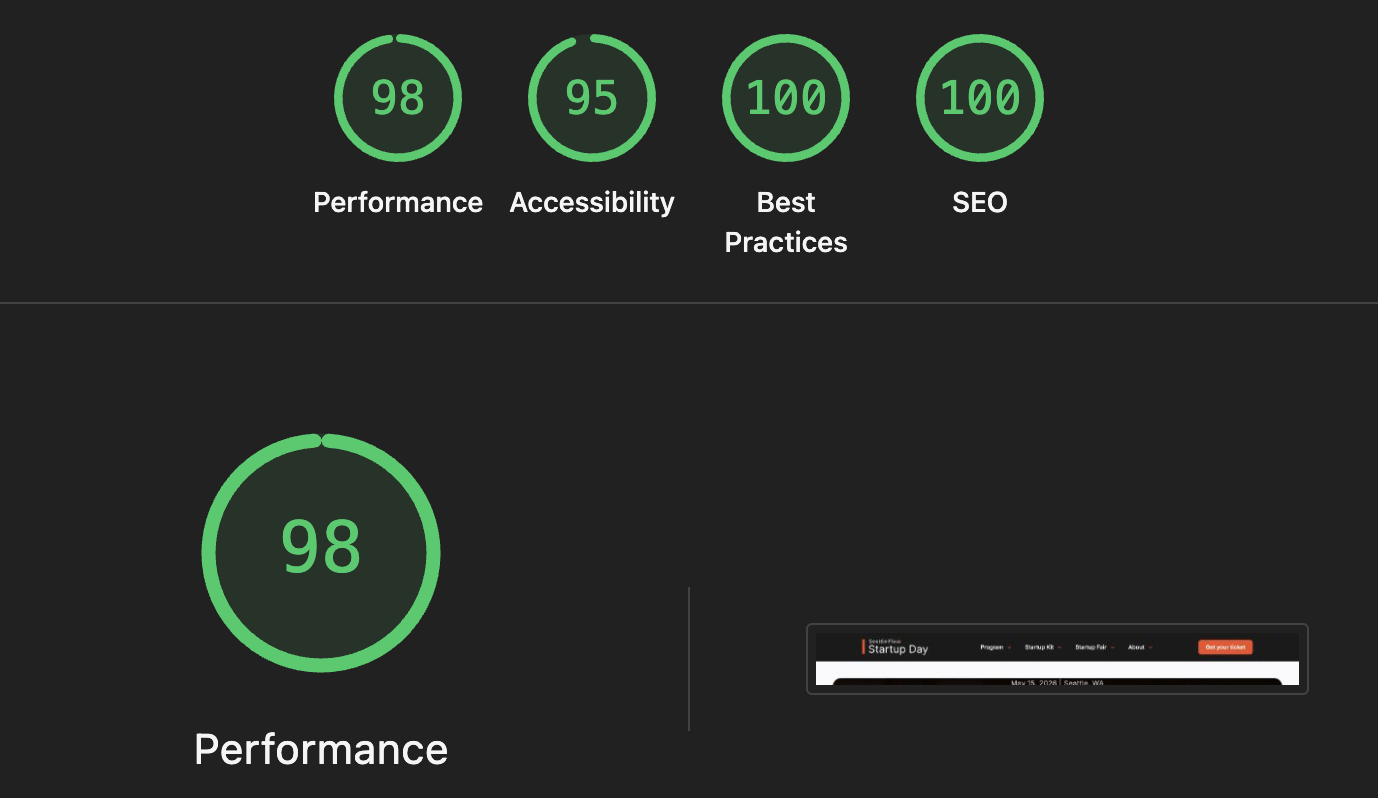

Showing up on a search engine and in the chat interface of an LLM is important for marketing. I thought Claude dropped the ball here. Unless I explicitly told it to, it didn't apply best practices to the HTML. I had to tell it to add a robots.txt, specify which pages should have no-cache/no-index, create a sitemap, add page meta tags (Open Graph, Twitter Cards, etc.), and create the JSON-LD entities.

That's a skill issue. Literally, I should have added a skill that taught it how to review the page for best practices on SEO.
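To give a sense of what those SEO items amount to, here is a minimal Python sketch that generates three of them: a schema.org `Event` block in JSON-LD, a sitemap, and a robots.txt. The function names and every argument value you pass in are placeholders (this is not the site's code or data). The schema.org property names and the sitemap namespace are the standard ones.

```python
import json
from xml.sax.saxutils import escape


def event_json_ld(name: str, start_date: str, venue: str, city: str, url: str) -> str:
    """Build a <script> tag holding a schema.org Event description."""
    data = {
        "@context": "https://schema.org",
        "@type": "Event",
        "name": name,
        "startDate": start_date,  # ISO-8601 date or date-time
        "location": {
            "@type": "Place",
            "name": venue,
            "address": {"@type": "PostalAddress", "addressLocality": city},
        },
        "url": url,
    }
    # Escape "<" so a value containing "</script>" cannot end the tag early.
    payload = json.dumps(data, indent=2).replace("<", "\\u003c")
    return '<script type="application/ld+json">' + payload + "</script>"


def sitemap_xml(base_url: str, paths: list[str]) -> str:
    """Build a sitemap listing base_url + path for each public page."""
    base = base_url.rstrip("/")
    urls = "\n".join(f"  <url><loc>{escape(base + path)}</loc></url>" for path in paths)
    return (
        '<?xml version="1.0" encoding="UTF-8"?>\n'
        '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">\n'
        + urls
        + "\n</urlset>\n"
    )


def robots_txt(base_url: str, disallow: list[str]) -> str:
    """Build a robots.txt that hides the given paths and points to the sitemap."""
    lines = ["User-agent: *"]
    lines += [f"Disallow: {path}" for path in disallow]
    lines.append(f"Sitemap: {base_url.rstrip('/')}/sitemap.xml")
    return "\n".join(lines) + "\n"
```

A review skill for SEO could check for exactly these outputs: a JSON-LD block on the event pages, every public route in the sitemap, and admin routes disallowed in robots.txt.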

Zero Lines

I often see people talking in awe of what they can achieve with Claude Code, and in my mind, I realize they are not asking enough of Claude. The real unlock is to push to the limit. I've been working on another project, the actual Seattle Flow, that's orders of magnitude more complex, but I've been shy in asking Claude to do too much. It has been one service/component at a time, with too much guidance from me.

What I realized by building the Startup Day website from scratch is that I can ask a lot more of Claude. In some cases, you want to keep a close grip on the system design because it's too hard to explain all the requirements and future uses you might have, but in others, it doesn't matter.

Next Time

I'm not sure there is much that I'd do differently next time. The skills I wrote have evolved since the start of this project. I also liked how the tasks were stored in the repo because it made it trivial for me to search, open, and edit them in VS Code without fuss, and Claude could look up what it had done, or check the backlog, to understand things. It addressed a lot of the shortcomings around LLM memory. This wouldn't work for a large project with dozens of developers, but it worked really well for this one.

I spent way too much time on lower-priority items just because they were so much fun. I probably could have done everything in three days if I were working on it without distraction, and without the bells-and-whistles that I added along the way.

We live in the age of the SaaSpocalypse, but I don’t think Luma, Eventbrite, or other event websites have much to worry about. The amount of effort I put into creating this website is not what most conference organizers would invest, unless they manage very large conferences, in which case they aren't using those SaaS products anyway.